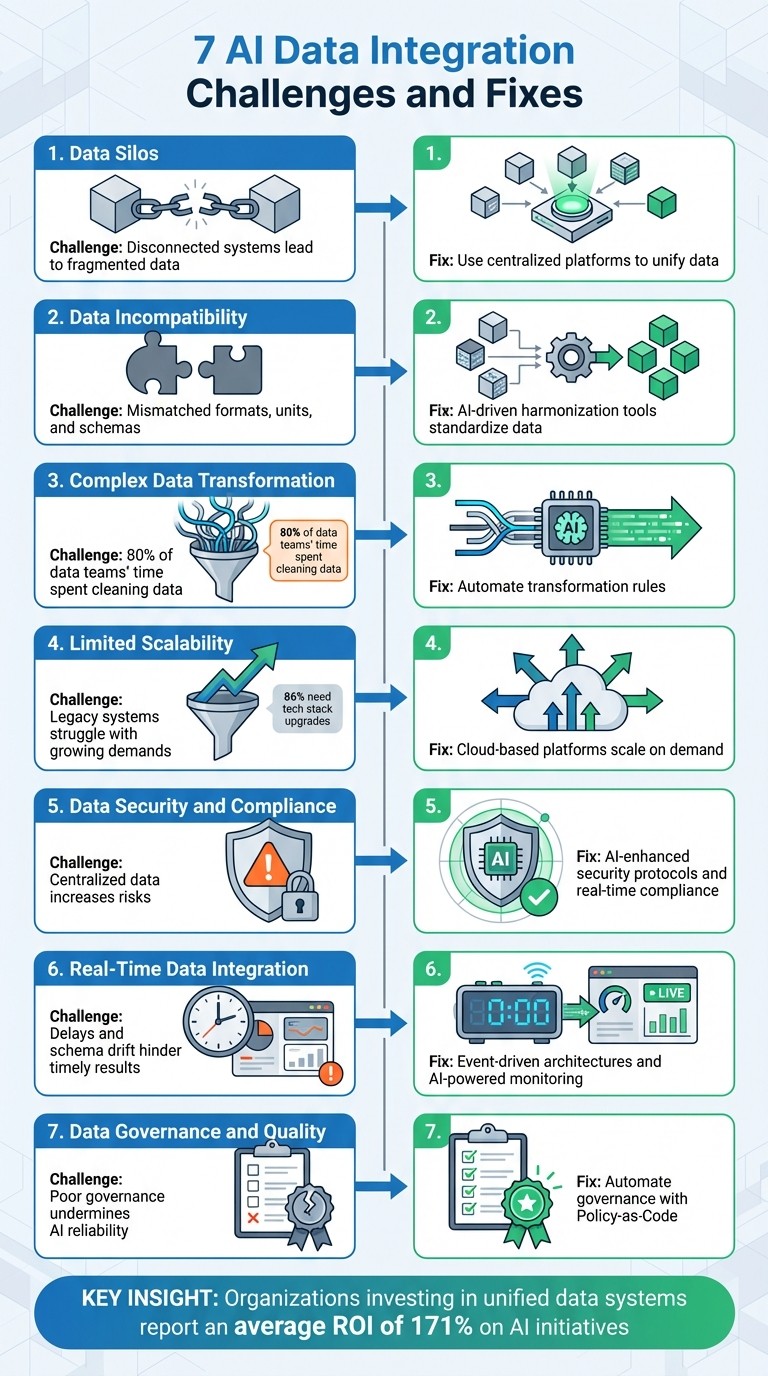

7 AI Data Integration Challenges and Fixes

Fix seven common AI data integration problems—data silos, schema drift, security, scalability, real-time pipelines, governance, and complex transformations.

Data integration is the backbone of successful AI implementation, but it's riddled with challenges that can derail projects. Here are the seven most common obstacles organizations face and actionable fixes to overcome them:

Data Silos: Disconnected systems lead to fragmented data and inconsistent insights.

Fix: Use centralized platforms to unify data across systems.

Data Incompatibility: Mismatched formats, units, and schemas disrupt workflows.

Fix: AI-driven harmonization tools standardize and resolve inconsistencies.

Complex Data Transformation: Cleaning and preparing raw data consumes 80% of data teams' time.

Fix: Automate transformation rules to streamline processes.

Limited Scalability: Legacy systems struggle with growing data demands.

Fix: Cloud-based platforms scale resources on demand.

Data Security and Compliance: Centralized data increases risks and regulatory challenges.

Fix: Implement AI-enhanced security protocols and real-time compliance checks.

Real-Time Data Integration: Delays and schema drift hinder AI's ability to deliver timely results.

Fix: Use event-driven architectures and AI-powered monitoring for real-time updates.

Data Governance and Quality: Poor governance and inconsistent definitions undermine AI reliability.

Fix: Automate governance with tools like Policy-as-Code and maintain clear data lineage.

Key Insight: Addressing these challenges not only improves AI reliability but also reduces wasted effort, enabling teams to focus on impactful tasks. Organizations that invest in unified data systems and automation report an average ROI of 171% on AI initiatives.

7 AI Data Integration Challenges and Solutions Overview


Challenge 1: Data Silos

Data silos occur when different departments use separate systems that don’t communicate effectively. Picture this: marketing keeps customer data on one platform, sales uses another, and finance relies on spreadsheets. The result? A fragmented system that hinders collaboration.

Legacy on-premise systems with proprietary protocols and sudden integrations - like those resulting from mergers - widen this gap. These architectural divides block AI models from accessing a full range of structured and unstructured data, leaving them with an incomplete picture.

The consequences for AI are serious. Models trained on fragmented data work with partial truths, much like trying to make decisions with only half of a market analysis. A staggering 80% of data scientists’ time is spent cleaning scattered data instead of building models [7]. Even worse, only 29% of organizations say their architecture fully connects AI to their entire business data ecosystem [8]. This leads to inconsistencies - imagine finance dashboards showing different revenue numbers than sales reports. Such discrepancies can erode leadership’s trust in AI-generated insights. Additionally, isolated systems make it harder to enforce consistent security policies, which are essential for compliance with regulations like GDPR or HIPAA.

"AI cannot thrive in an environment where data remains isolated across departments and systems" [7].

Breaking down these silos is essential, and centralized data platforms provide a practical solution.

Fix: Centralized Data Platforms

To tackle data silos, organizations need centralized data platforms. These platforms use standardized connectors to translate various native formats - whether from legacy SOAP APIs or modern cloud databases - into a unified structure before feeding data into AI models [3][4].
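The sketch below shows the shape of the translation step: per-source adapters that map native records into one shared structure. The source names (`crm`, `erp`), their field names, and the `UnifiedCustomer` record are hypothetical. A real platform would supply pre-built connectors rather than hand-written functions.

```python
from dataclasses import dataclass

# Hypothetical unified record that downstream AI models consume.
@dataclass(frozen=True)
class UnifiedCustomer:
    customer_id: str
    full_name: str
    email: str
    source: str

def from_crm(record: dict) -> UnifiedCustomer:
    # Assumed CRM export: separate first/last name fields.
    return UnifiedCustomer(
        customer_id=str(record["ContactId"]),
        full_name=f'{record["FirstName"]} {record["LastName"]}',
        email=record["Email"].strip().lower(),
        source="crm",
    )

def from_erp(record: dict) -> UnifiedCustomer:
    # Assumed ERP export: "Last, First" in a single field.
    last, first = (part.strip() for part in record["cust_name"].split(",", 1))
    return UnifiedCustomer(
        customer_id=str(record["cust_no"]),
        full_name=f"{first} {last}",
        email=record["mail"].strip().lower(),
        source="erp",
    )

# Registry: each connector translates its native format into the shared structure.
ADAPTERS = {"crm": from_crm, "erp": from_erp}

def unify(source: str, records: list[dict]) -> list[UnifiedCustomer]:
    adapter = ADAPTERS[source]
    return [adapter(r) for r in records]
```

Adding a new system means registering one more adapter; nothing downstream of `UnifiedCustomer` has to change.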

A compelling example comes from Coca-Cola Europacific Partners. In June 2024, the company dismantled data silos during a procurement transformation. By integrating data across operations and customer systems, they achieved over $40 million in business benefits, including $5 million in annual cost savings and avoidance. This was all thanks to deeper insights enabled by consolidated data access.

Modern solutions like data virtualization and lakehouse architectures provide unified views across cloud, on-premises, and edge systems. They combine flexibility with governance, ensuring AI models have the robust support they need [3]. Automated data profiling tools add another layer of efficiency by identifying issues - like schema drift, null spikes, or duplicate keys - before they affect AI models [3]. Finally, conducting a thorough audit of data sources and ownership can help organizations prioritize integration efforts, focusing on key systems like CRM and ERP while phasing out outdated legacy solutions.
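Automated profiling can be as simple as a few checks run on every incoming batch. This minimal sketch flags duplicate keys and null spikes. The `baseline_null_rate` input, the 2x spike factor, and the 1% floor are assumptions; production tools learn baselines from earlier runs.

```python
from collections import Counter

def profile_batch(rows: list[dict], key: str, baseline_null_rate: dict[str, float],
                  spike_factor: float = 2.0) -> list[str]:
    """Flag duplicate keys and null spikes in one batch before it reaches a model.

    baseline_null_rate maps field -> historical share of nulls (assumed given here).
    """
    if not rows:
        return ["empty batch"]
    issues = []
    # Duplicate keys: any key value that appears more than once.
    counts = Counter(r.get(key) for r in rows)
    dupes = sorted(str(k) for k, n in counts.items() if n > 1)
    if dupes:
        issues.append(f"duplicate {key}: {', '.join(dupes)}")
    # Null spikes: current null share well above the historical baseline.
    for field, baseline in baseline_null_rate.items():
        rate = sum(r.get(field) is None for r in rows) / len(rows)
        # The 1% floor means a field with a 0% baseline alerts only once nulls exceed 1%.
        if rate > max(baseline * spike_factor, 0.01):
            issues.append(f"null spike in {field}: {rate:.0%} vs baseline {baseline:.0%}")
    return issues
```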

Challenge 2: Data Incompatibility

Even when data isn’t locked away in silos, it often comes in formats that don’t play well together. One system might store names as "Last, First" while another uses "First Last". Sales platforms might log revenue in dollars, while marketing tools track budgets in cents. Legacy systems compound the problem: many rely on outdated SOAP APIs or proprietary protocols that clash with the REST-based requirements of today’s AI tools [3][4].

These mismatched formats create serious headaches. For example, 86% of enterprises report needing to upgrade their tech stacks, and 42% rely on eight or more data sources to power their AI efforts [4]. When schemas don’t match, AI models are forced to grapple with inconsistent data, which often leads to errors throughout workflows. It’s no small issue - 80% of AI projects fail due to data quality and integration challenges [2]. Even seemingly minor differences, like naming conventions or date formats, can cause cascading failures when AI agents attempt to execute complex, chained actions. Tackling these incompatibilities head-on is essential for smooth AI operations.

"Seamless access to high-quality, well-structured data is the fuel for any AI engine. Yet most enterprises operate across fragmented systems... Bridging these disparate environments in real time is not just a technical task - it's a strategic necessity." - AIJ Thought Leader, AI Journal [1]

These challenges make it clear: intelligent, automated data harmonization is no longer optional - it’s a must.

Fix: AI-Driven Data Harmonization

This is where machine learning steps in to save the day. Advanced models can automatically detect and resolve format mismatches before the data even hits your AI systems. Forget about patchwork solutions like point-to-point scripts; instead, consider unified data layers that capture real-time updates and translate various formats into consistent, standardized feeds [3].
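The paragraph above describes ML-driven harmonization. The sketch below is a deliberately simpler, rule-based stand-in (not machine learning) that shows what "translating formats into consistent feeds" means for the dollars-versus-cents and date-format cases. The per-source unit table, the field names, and the accepted date formats are all assumptions.

```python
from datetime import date, datetime
from decimal import Decimal

# Assumed per-source conventions: which unit each source uses for money.
MONEY_UNIT = {"sales": "dollars", "marketing": "cents"}
# Assumed date formats seen across sources, tried in order (US month/day before day.month).
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y")

def to_dollars(amount, source: str) -> Decimal:
    value = Decimal(str(amount))  # Decimal avoids float rounding on currency
    return value / 100 if MONEY_UNIT[source] == "cents" else value

def to_date(raw: str) -> date:
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw, fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")

def harmonize(record: dict, source: str) -> dict:
    """Map one source record onto a single standardized shape."""
    return {
        "amount_usd": to_dollars(record["amount"], source),
        "booked_on": to_date(record["date"]).isoformat(),
        "source": source,
    }
```

A learned harmonizer would infer rules like these from the data. Fixed rules are easier to audit, but someone has to maintain them.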

Modern harmonization tools are designed to catch schema changes early. For example, if a team upstream renames a field or adds new columns, automated profiling can flag the issue immediately. This gives teams the chance to update field mappings before the AI models encounter errors [3]. Standards like the Model Context Protocol (MCP) are also making life easier by reducing the need for custom connectors, streamlining how models integrate with enterprise tools and data [5]. Plus, small language models (SLMs) are proving to be an efficient option for handling straightforward tasks like data extraction and transformation, offering a cost-effective alternative to larger models [5].
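A minimal drift check compares the field names a pipeline expects with the ones actually arriving. It then suggests likely renames using the standard library's fuzzy string matching. The 0.6 similarity cutoff and the example field names are assumptions.

```python
import difflib

def detect_drift(expected: set[str], observed: set[str]) -> dict:
    """Compare expected vs. observed field names and guess likely renames."""
    missing = expected - observed
    added = observed - expected
    # Compare case-insensitively so "customer_id" can match "cust_ID".
    lowered = {name.lower(): name for name in added}
    renames = {}
    for field in sorted(missing):
        match = difflib.get_close_matches(field.lower(), sorted(lowered), n=1, cutoff=0.6)
        if match:
            renames[field] = lowered[match[0]]
    return {"missing": sorted(missing), "added": sorted(added), "possible_renames": renames}
```

The rename hints are heuristics for a human to confirm before updating field mappings, not changes to apply automatically.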

To further reduce risks, deploy middleware and versioned APIs with clear naming conventions - think customer_id or invoice_total. These contracts ensure that breaking changes trigger automated tests instead of silently causing failures. Pre-built connectors for popular platforms like SAP and Zendesk also simplify authentication and schema translation [3][4]. By adopting these harmonization strategies, you’ll set the stage for AI systems to access consistent, reliable data across your organization.
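One way to make "breaking changes trigger automated tests" concrete is to diff two versions of a data contract in CI. The contract format here (field name mapped to a type name and a required flag) is a hypothetical simplification of schema languages such as JSON Schema or Avro.

```python
# Hypothetical contract format: field name -> (type name, required?)
Contract = dict[str, tuple[str, bool]]

def breaking_changes(old: Contract, new: Contract) -> list[str]:
    """List changes in `new` that are incompatible with systems built against `old`."""
    problems = []
    for field, (old_type, _) in old.items():
        if field not in new:
            # Readers still look up the old name.
            problems.append(f"removed or renamed: {field}")
        elif new[field][0] != old_type:
            problems.append(f"type changed: {field} {old_type} -> {new[field][0]}")
    for field, (_, required) in new.items():
        if field not in old and required:
            # Existing writers don't send it.
            problems.append(f"new required field: {field}")
    return problems
```

In a CI job, `assert not breaking_changes(published, proposed)` turns a silent break into a failing test. Adding an optional field passes.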

Challenge 3: Complex Data Transformation

Raw data is almost never ready to use straight out of the gate. Before AI models can perform, data teams have to roll up their sleeves to clean up incomplete records, fill in missing fields, and ensure the data is in a consistent format. In fact, 80% of their time is spent on building connectors and cleaning data instead of actually training AI models [3][4].

This process isn't just tedious; it creates inefficiencies. Data is often scattered across CRMs, ERPs, and outdated databases that don’t share a common structure. Teams frequently rely on ad-hoc CSV exports and manual merging, which introduces avoidable issues like typos, mismatched timestamps, or missing columns [3]. To make matters worse, departments often define key terms differently - think "revenue" - leading to semantic conflicts that reduce AI accuracy [9]. These kinds of issues not only erode trust in AI outputs but also stretch timelines, sometimes even causing projects to be scrapped altogether.

Then there are the infrastructure headaches. Filling integration gaps with quick-fix scripts might work temporarily, but these solutions create technical debt. When vendors release updates or modify APIs, those fragile scripts can break, derailing workflows [3].

"Without a strong integration backbone, AI becomes a house built on sand - promising in theory, unstable in reality." – AIJ Thought Leader, AI Journal [1]

The good news? Automated, AI-powered transformation tools can help solve these challenges.

Fix: Automated Transformation Rules

Automating data transformation can take much of the heavy lifting off your team’s plate while ensuring your data pipelines are clean and reliable. AI-enabled tools now handle tasks like profiling and validating data. They flag discrepancies, duplicates, or spikes in missing values early - stopping bad data from ever reaching your AI models [3][9]. This proactive approach helps avoid the classic "garbage in, garbage out" problem that plagues AI training.

Pre-built connectors for popular platforms simplify authentication and schema translation, cutting out the need for custom development [3]. For tasks like data extraction or classification, Small Language Models (SLMs) provide an efficient solution. They’re highly effective for deterministic tasks and come with the added benefits of lower inference costs and reduced energy consumption compared to their larger counterparts [5]. Additionally, applying practices like version control and automated testing to your transformation rules ensures data pipelines remain error-free, treating them with the same care as production code [9].
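Treating a transformation rule like production code means it lives in version control with tests beside it. This sketch uses a hypothetical phone-number rule (North American numbers only) and pytest-style test functions. The rule itself is illustrative, not a recommended normalization standard.

```python
from typing import Optional

def normalize_phone(raw: Optional[str], country_code: str = "1") -> Optional[str]:
    """Hypothetical rule: normalize North American numbers to '+1XXXXXXXXXX'.

    Returns None when a value can't be normalized, so bad rows surface instead of being guessed at.
    """
    if raw is None:
        return None
    digits = "".join(ch for ch in raw if ch.isdigit())
    if len(digits) == 10:  # local number without a country code
        digits = country_code + digits
    if len(digits) != 10 + len(country_code) or not digits.startswith(country_code):
        return None
    return "+" + digits

# Tests are versioned next to the rule and run in CI (for example with pytest).
def test_formats_converge():
    for raw in ("(555) 010-2345", "555.010.2345", "+1 555 010 2345"):
        assert normalize_phone(raw) == "+15550102345"

def test_rejects_unparseable_values():
    assert normalize_phone("12-34") is None
    assert normalize_phone(None) is None
```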

Another key step? Establishing a business glossary. By creating a shared understanding of terms like "customer" or "revenue", semantic conflicts between departments can be resolved. When everyone works from the same definitions, transformation rules can be applied consistently across the organization. Documenting these rules centrally through metadata management ensures that as systems evolve, your AI models always receive clean, standardized data. This consistency is critical for delivering the actionable insights needed to drive strategic decisions powered by AI.
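A glossary has the most effect when each definition also has an executable form, so every pipeline applies the same rule. In this sketch the `GlossaryTerm` structure is hypothetical, as are the definition of "customer" (at least one paid order, prospects excluded) and the owning team.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class GlossaryTerm:
    name: str
    definition: str                 # human-readable, for the data catalog
    owner: str                      # team accountable for the definition
    rule: Callable[[dict], bool]    # executable form used by every pipeline

# Hypothetical central glossary entry.
GLOSSARY = {
    "customer": GlossaryTerm(
        name="customer",
        definition="An account with at least one paid order; prospects are excluded.",
        owner="finance",
        rule=lambda acct: acct.get("paid_orders", 0) > 0,
    ),
}

def apply_term(term: str, rows: list[dict]) -> list[dict]:
    """Filter rows using the single, centrally owned definition of `term`."""
    rule = GLOSSARY[term].rule
    return [r for r in rows if rule(r)]
```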

Challenge 4: Limited Scalability

Traditional systems might work for smaller AI projects, but they quickly hit their limits as data volumes grow and new sources emerge. A striking 86% of enterprises report needing major upgrades to their tech stacks just to deploy AI agents effectively [4]. The problem goes beyond storage - it includes processing speeds, network delays, and the heavy manual workload of maintaining these systems.

Legacy systems are often at the heart of scalability issues. Many rely on outdated infrastructure, like SOAP APIs, that simply can't keep up with the real-time, high-demand requirements of modern AI agents [3][4]. These systems struggle to handle unpredictable workload spikes, leading to performance hiccups. Add to that the challenge of integrating multiple data sources, and you’ve got bottlenecks that slow everything down. These bottlenecks not only hurt performance but also make scaling up a much bigger challenge as AI deployments expand.

Custom connectors add another layer of complexity. While building custom integrations might work for a few AI agents, it’s not sustainable as deployments grow. A single API update from a vendor could disrupt multiple agents at once [4]. As organizations experience "agent sprawl", managing tasks like identity tracking, secret rotation, and version control becomes unmanageable. In fact, 67% of data engineering resources in centralized organizations are spent maintaining pipelines rather than driving innovation [6].

"Integration is no longer an IT backend concern; it's a frontline enabler of business value." – AIJ Thought Leader, AI Journal [1]

Scalability issues also arise in multi-cloud environments. Platforms like AWS, Azure, and Google Cloud each come with their own security models and data formats, adding complexity to integration efforts [9]. Network latency and unpredictable data transfer costs further complicate planning and budgeting, making it tough to scale AI deployments in a cost-efficient way. Addressing these challenges is critical for creating a reliable, scalable AI integration strategy across an organization.

Fix: Cloud-Based AI Platforms

The solution? Cloud-based AI platforms. These platforms are built to handle scalability challenges from the ground up. Instead of relying on rigid, monolithic systems, they use microservices and containerization to scale horizontally - adding resources exactly where and when they’re needed [1]. Containers also offer benefits like repeatable builds, quick rollbacks, and precise resource allocation, so you’re not wasting money on idle infrastructure [3].

Cloud platforms also streamline integration. With event-driven coordination and unified data layers, they eliminate the need for fragile, custom integration code. Pre-built connectors can automatically translate data into a consistent format, while message queues buffer traffic spikes, ensuring processing only kicks in when new data arrives [3][4]. This setup smooths out rate-limit issues, reduces idle costs, and makes it easier to integrate multiple data sources.
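The buffering behavior described above can be sketched with Python's `asyncio.Queue`. A bounded queue absorbs a burst from the producer, and the consumer wakes only when an event is available. The queue size and processing rate are assumptions; a managed cloud queue plays this role in production.

```python
import asyncio

async def producer(queue: asyncio.Queue, events: list[dict]) -> None:
    # A burst of events: put() waits whenever the bounded queue is full (backpressure).
    for event in events:
        await queue.put(event)
    await queue.put(None)  # sentinel: no more events

async def consumer(queue: asyncio.Queue, processed: list[dict], rate_per_sec: float) -> None:
    # get() suspends until an event arrives, so nothing runs while the queue is idle.
    while True:
        event = await queue.get()
        if event is None:
            break
        processed.append(event)  # stand-in for calling the model or target system
        await asyncio.sleep(1 / rate_per_sec)  # pace calls under a downstream rate limit

async def run(events: list[dict], maxsize: int = 10, rate_per_sec: float = 1000.0) -> list[dict]:
    queue: asyncio.Queue = asyncio.Queue(maxsize=maxsize)
    processed: list[dict] = []
    await asyncio.gather(producer(queue, events), consumer(queue, processed, rate_per_sec))
    return processed

burst = [{"order_id": i} for i in range(50)]
result = asyncio.run(run(burst))
```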

Governance becomes simpler, too. Automated monitoring can flag issues like token expiration, schema changes, or latency spikes before they disrupt users [3]. When managing hundreds of AI agents, this kind of proactive oversight is essential.
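A proactive check for two of the signals mentioned, token expiration and latency spikes, can be a small function run on a schedule. The 24-hour warning window and the 500 ms p95 budget are assumed thresholds. The percentile uses the nearest-rank method.

```python
import math
from datetime import datetime, timedelta, timezone

def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]

def health_alerts(
    token_expires_at: datetime,
    now: datetime,
    latencies_ms: list[float],
    token_warning: timedelta = timedelta(hours=24),  # assumed alerting lead time
    p95_budget_ms: float = 500.0,                     # assumed latency budget
) -> list[str]:
    alerts = []
    # Token expiration: warn before the credential lapses, not after calls start failing.
    if token_expires_at - now <= token_warning:
        alerts.append(f"token expires at {token_expires_at.isoformat()}")
    # Latency spike: watch the tail, since averages hide intermittent slow calls.
    if latencies_ms and p95(latencies_ms) > p95_budget_ms:
        alerts.append(f"p95 latency {p95(latencies_ms):.0f} ms exceeds {p95_budget_ms:.0f} ms budget")
    return alerts

now = datetime(2025, 1, 1, tzinfo=timezone.utc)
print(health_alerts(now + timedelta(hours=3), now, [120.0] * 17 + [900.0] * 3))
```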

Challenge 5: Data Security and Compliance

Centralizing sensitive data creates an attractive target for cyber threats. In fact, 45% of leaders cite security vulnerabilities as a major risk when adopting agentic AI, and 43% are primarily concerned about AI-specific cyberattacks [5]. This pressure is even more intense in industries like healthcare, finance, and retail, where regulations such as GDPR and CCPA demand strict oversight of data - how it's accessed, stored, and transferred.

The problem is compounded by outdated practices like static roles and shared API keys, which expand the attack surface. A single compromised credential can jeopardize entire data repositories. Manual compliance checks can't keep up with the speed of real-time data operations, leaving organizations exposed. When AI agents autonomously pull data from multiple sources, 62% of practitioners identify security as their biggest deployment challenge [4]. The challenge isn’t just avoiding scrutiny - it’s proving, in real time, that every data movement complies with regulations.

Cross-border cloud operations add another layer of complexity. Data residency laws vary by region, and even something as simple as sending a prompt to a model hosted in the wrong jurisdiction can result in violations. Without automated lineage tracking, answering basic audit questions becomes a nightmare: Where did this data come from? Who accessed it? What transformations were applied? These questions aren’t theoretical - they’re core to frameworks like the EU AI Act, which mandates documented risk classification and traceability by August 2026 [5]. To meet these demands, organizations need systems that monitor and enforce compliance as data flows across platforms.

Fix: AI-Enhanced Security Protocols

AI-driven security protocols automate tasks that once required entire compliance teams. Policy-as-Code tools such as Open Policy Agent (OPA), whose policies are written in the Rego language, convert legal mandates - such as GDPR's right-to-erasure or CCPA's opt-out rules - into executable, real-time policies [3]. Instead of relying on periodic audits, these systems validate every data interaction against compliance rules before it happens. Encryption is no longer an afterthought: TLS 1.3 secures data in transit, while AES-256 protects data at rest [3].
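OPA policies are written in Rego. To keep all examples in one language, the sketch below is a plain-Python stand-in for the same idea: policy rules as code that every data request passes through before it executes. The request fields, the erasure and opt-out sets, and the region names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Request:
    action: str               # e.g. "read", "export"
    subject_id: str           # whose personal data is involved
    destination_region: str
    purpose: str              # e.g. "analytics", "sale"

@dataclass
class PolicyContext:
    erased_subjects: set = field(default_factory=set)     # right-to-erasure requests
    opted_out_of_sale: set = field(default_factory=set)   # opt-out requests
    allowed_regions: set = field(default_factory=lambda: {"eu-west-1"})  # assumed residency rule

def evaluate(req: Request, ctx: PolicyContext) -> tuple[bool, list[str]]:
    """Every rule that fires adds a denial reason; allow only if none fire."""
    reasons = []
    if req.subject_id in ctx.erased_subjects:
        reasons.append("subject has requested erasure")
    if req.purpose == "sale" and req.subject_id in ctx.opted_out_of_sale:
        reasons.append("subject opted out of sale")
    if req.destination_region not in ctx.allowed_regions:
        reasons.append(f"region {req.destination_region} not permitted")
    return (not reasons, reasons)
```

Returning reasons along with the decision gives auditors a record of why each request was allowed or denied.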

Here’s how AI-enhanced security compares to traditional methods:

AI-enhanced compliance tools create tamper-proof audit trails by tagging data with metadata at every stage - whether during ingestion, transformation, or retrieval. This allows auditors to trace a single record through every AI agent, API call, and transformation rule. Automated reasoning systems use formal logic to ensure that AI outputs align with predefined safety parameters, catching errors or hallucinations before they affect downstream processes.
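A common way to make a lineage trail tamper-evident is hash chaining: each event's hash covers the previous event's hash, so editing any record breaks every later link. This is a minimal sketch with hypothetical stage and actor names. A chain alone is tamper-evident rather than tamper-proof; the latest hash must also be stored somewhere an attacker cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64

def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_event(trail: list[dict], record_id: str, stage: str, actor: str, detail: str) -> dict:
    """Append a lineage event whose hash also covers the previous event's hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    body = {"record_id": record_id, "stage": stage, "actor": actor,
            "detail": detail, "prev_hash": prev_hash}
    event = {**body, "hash": _digest(body)}
    trail.append(event)
    return event

def verify(trail: list[dict]) -> bool:
    """Recompute every link; any edited event makes verification fail."""
    prev_hash = GENESIS
    for event in trail:
        body = {k: v for k, v in event.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or event["hash"] != _digest(body):
            return False
        prev_hash = event["hash"]
    return True

def lineage(trail: list[dict], record_id: str) -> list[str]:
    """Trace one record through every stage that touched it."""
    return [f'{e["stage"]} by {e["actor"]}' for e in trail if e["record_id"] == record_id]
```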

Identity management also gets a major upgrade. Instead of shared keys, AI agents use service accounts with minimal permissions. Each agent is restricted to the exact access it needs - separating "read" and "write" privileges - so if one agent is compromised, its access can be revoked instantly without affecting others [5]. Passwords give way to phishing-resistant authentication methods such as passkeys. For critical actions, such as data deletions or financial transfers, human-in-the-loop controls provide an extra layer of oversight [5].
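The per-agent model can be sketched as a small registry. Each agent holds explicit `tool:mode` scopes, read never implies write, revocation is per agent, and certain actions always require a human. The scope format and the list of critical actions are assumptions.

```python
class AgentRegistry:
    """Per-agent service accounts with explicit scopes instead of one shared key."""

    def __init__(self) -> None:
        self._scopes: dict[str, set[str]] = {}

    def register(self, agent_id: str, scopes: set[str]) -> None:
        self._scopes[agent_id] = set(scopes)

    def revoke(self, agent_id: str) -> None:
        # Revoking one agent leaves every other agent's access untouched.
        self._scopes.pop(agent_id, None)

    def authorize(self, agent_id: str, tool: str, mode: str) -> bool:
        # Scopes look like "crm:read" or "crm:write"; read access never implies write.
        return f"{tool}:{mode}" in self._scopes.get(agent_id, set())

# Hypothetical critical actions that always route to a human approver.
HUMAN_APPROVAL_REQUIRED = {"ledger:write", "records:delete"}

def needs_human(tool: str, mode: str) -> bool:
    return f"{tool}:{mode}" in HUMAN_APPROVAL_REQUIRED
```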

"Security 2.0 starts when you assume the prompt is an attack surface and the tool layer is a privilege escalation path." – ViitorCloud [5]

The benefits go beyond compliance. Platforms with built-in certifications can automatically generate audit-ready reports - including logs, encryption details, and lineage documentation - cutting what used to take months down to mere clicks. This not only satisfies regulators but also positions security as an operational strength, enabling faster AI adoption without compromising on rigor or trust.

Challenge 6: Real-Time Data Integration

Real-time integration brings its own set of hurdles, especially when AI needs to respond instantly. The effectiveness of AI models heavily depends on the quality and timeliness of the data they receive. In industries like finance, logistics, and e-commerce, outdated data can derail quick, accurate decision-making. Unfortunately, many legacy systems weren't designed with real-time synchronization in mind. Older ERPs and procurement databases often rely on outdated protocols like SOAP or lack modern APIs, forcing teams to rely on batch exports that may lag hours - or even days - behind actual events.

Latency issues add another layer of complexity. Distributed systems managing large volumes of data can experience network reliability problems, leading to timeouts during high-demand periods. Standard APIs often have undocumented rate limits or non-idempotent endpoints, which can break down when autonomous AI agents retry calls rapidly without human oversight. These delays leave AI models working with incomplete or outdated information, undermining their effectiveness.
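The retry problem with non-idempotent endpoints can be contained by generating one idempotency key per logical operation and reusing it on every attempt. The server then returns the original result instead of acting twice. This sketch simulates a hypothetical endpoint whose first response is lost after the charge was already created. The backoff parameters are assumptions.

```python
import time
import uuid

class FlakyPaymentsAPI:
    """Hypothetical endpoint: the first response is lost; replays are de-duplicated by key."""

    def __init__(self) -> None:
        self.calls = 0
        self.results: dict[str, str] = {}

    def create_charge(self, amount_cents: int, idempotency_key: str) -> str:
        self.calls += 1
        if idempotency_key in self.results:  # replayed request: return the original result
            return self.results[idempotency_key]
        charge_id = f"ch_{len(self.results) + 1}"
        self.results[idempotency_key] = charge_id
        if self.calls == 1:
            raise TimeoutError("response lost after the charge was created")
        return charge_id

def call_with_retries(fn, *args, attempts: int = 4, base_delay: float = 0.01, **kwargs):
    """Exponential backoff; safe here only because every retry reuses the same key."""
    for attempt in range(attempts):
        try:
            return fn(*args, **kwargs)
        except TimeoutError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)

api = FlakyPaymentsAPI()
key = str(uuid.uuid4())  # one key per logical operation, not per attempt
charge = call_with_retries(api.create_charge, 5000, idempotency_key=key)
```

Without the key, the retry would create a second charge. With it, two calls produce exactly one charge.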

Then there's the issue of schema drift. This happens when upstream systems make unannounced changes, like renaming fields or adding columns, which can silently disrupt downstream AI processes. For instance, if a database changes "customer_id" to "cust_ID", it could break an entire real-time pipeline without anyone noticing immediately. Without automated tools to detect these changes, schema drift can lead to inaccurate AI outputs. Ensuring consistent data across multiple streaming sources requires robust error-handling systems; otherwise, conflicting or incomplete records can compromise the insights AI generates.

Fix: AI-Powered Streaming and Monitoring

Event-driven architectures can address latency issues by processing data as events occur. Tools like Apache Kafka enable low-latency streaming, while Change Data Capture (CDC) mechanisms track updates in real time. This means AI models can receive only the most relevant updates - like new orders, inventory changes, or customer record modifications - without unnecessary delays.
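Production CDC tools usually read the database's transaction log. The sketch below approximates the idea by diffing two snapshots keyed by primary key, which is enough to show the insert/update/delete events an AI consumer would receive. The event field names are assumptions.

```python
def change_events(before: dict[str, dict], after: dict[str, dict]) -> list[dict]:
    """Emit insert/update/delete events between two keyed snapshots (snapshot-diff CDC)."""
    events = []
    for key in sorted(before.keys() | after.keys()):
        if key not in before:
            events.append({"op": "insert", "key": key, "after": after[key]})
        elif key not in after:
            events.append({"op": "delete", "key": key, "before": before[key]})
        elif before[key] != after[key]:
            old, new = before[key], after[key]
            # Ship only the fields that changed, not the whole row.
            changed = {f: new.get(f) for f in old.keys() | new.keys() if old.get(f) != new.get(f)}
            events.append({"op": "update", "key": key, "changed": changed})
    return events
```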

For systems unable to support true real-time streaming, micro-batching provides a practical alternative. This method processes data in small batches every few minutes, offering a near-real-time experience. A unified data layer can further streamline integration by automatically converting different schemas into a consistent format, eliminating the need for custom transformation code. This becomes especially critical as organizations adopt agentic AI, where autonomous agents require direct, real-time access to production systems and audit trails [5].
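Micro-batching can be sketched as grouping timestamped events into fixed windows, five minutes by default, with a cap on batch size. Timestamps are assumed to be epoch seconds. In a live system a scheduler would run this every window, and the `ts` field name is hypothetical.

```python
from itertools import groupby

def micro_batches(events: list[dict], window_seconds: int = 300, max_size: int = 1000) -> list[list[dict]]:
    """Group events into fixed time windows (by event time), splitting oversized windows."""
    batches = []
    ordered = sorted(events, key=lambda e: e["ts"])
    for _, window in groupby(ordered, key=lambda e: e["ts"] // window_seconds):
        items = list(window)
        for start in range(0, len(items), max_size):
            batches.append(items[start:start + max_size])
    return batches
```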

AI-powered monitoring tools also play a vital role. They can track token expirations, schema changes, and latency spikes, flagging issues like renamed database fields or API rate limit violations as they happen. Small Language Models (SLMs) can manage simpler tasks such as classification, data validation, and extraction, reducing the energy demands and costs associated with larger models while maintaining speed. Automated reasoning checks can catch inconsistencies quickly, ensuring outdated data doesn’t lead to incorrect actions.

Modern agentic workflows demand a new level of observability. Unlike traditional systems that focused on output quality, these workflows require action-based monitoring - tracking tool usage, state changes, and decision-making paths. This helps prevent "agentic drift", where an AI agent keeps executing tasks but gradually deviates from its intended goal as tools and contexts change. Treating APIs as products - with clear contracts, versioning, and automated tests - ensures stability in the execution layer, even as AI agents operate at machine speed.
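Action-based monitoring can start with something as simple as recording every tool call and checking it against the agent's plan. This sketch flags off-plan tools and an exceeded write budget, two crude proxies for agentic drift. The allowed-tool set and write budget are hypothetical per-task settings.

```python
from dataclasses import dataclass, field

@dataclass
class ActionMonitor:
    """Tracks what an agent does (tool calls), not just what it outputs."""
    allowed_tools: set[str]
    max_writes: int
    calls: list[tuple[str, str]] = field(default_factory=list)

    def record(self, tool: str, mode: str) -> list[str]:
        self.calls.append((tool, mode))
        alerts = []
        if tool not in self.allowed_tools:
            alerts.append(f"off-plan tool: {tool}")
        writes = sum(1 for _, m in self.calls if m == "write")
        if writes > self.max_writes:
            alerts.append(f"write budget exceeded: {writes} > {self.max_writes}")
        return alerts
```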


Real-time data integration is more than just a technical challenge - it's the backbone of AI systems that don’t just provide insights but take decisive action. Companies that prioritize streaming architectures, proactive monitoring, and automated quality checks will be better equipped to meet the demands of fast-moving markets.

Challenge 7: Data Governance and Quality

Ensuring proper data governance and maintaining high data quality have become critical as AI systems evolve to support autonomous decision-making. These challenges build on earlier issues like data integration and transformation but come with their own complexities.

Poor data quality can cripple AI systems, causing errors that ripple through interconnected processes. For instance, when upstream systems rename fields or add columns without notice, downstream AI processes can fail without immediate detection. This issue is further complicated by regulations like the EU AI Act, which mandates traceability-by-design by August 2026 [5]. A survey of 1,000 practitioners shows that while security is the top concern for AI agent deployment, data governance is a close second, underscoring its importance [4].

Semantic conflicts are another hurdle. Different departments may define the same term differently - take "customer", for example. One team might exclude prospects from their definition, while another might include them. Without a unified data model, AI systems trained on inconsistent definitions produce unreliable results. Legacy systems, with their outdated APIs and hardcoded ETL scripts, add to the governance challenges by making consistent policy enforcement difficult.

The shift from basic chatbots to autonomous agents has raised the stakes. Unlike chatbots, these agents don't just answer questions - they perform tasks that can directly affect production systems. Over time, as tools and contexts evolve, agents may drift from their intended goals. Without measurable outcomes and clearly defined policies, this drift creates vulnerabilities [5]. Adding to the complexity, 86% of enterprises report the need for major tech stack upgrades to deploy AI agents effectively [4], widening the governance gap even further.

Fix: AI-Driven Quality Frameworks

One solution lies in turning governance from a manual process into an automated one. Policy-as-Code encodes governance policies as executable rules, for example in Rego, the policy language of Open Policy Agent (OPA). This approach replaces static approvals with dynamic, real-time checks that adapt as data flows through the system [3].

Automated profiling tools can catch data issues early, preventing them from reaching AI models. Adding data lineage tags at every stage of the pipeline creates an audit trail, supporting incident response and regulatory compliance. These measures align with the traceability requirements mentioned earlier [3].

For task optimization, Small Language Models (SLMs) can handle straightforward tasks like classification and validation efficiently, reducing energy costs. Larger models, better suited for nuanced reasoning, can then be reserved for more complex tasks. This division helps maintain governance without overloading resources [5].
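The division of labor can be expressed as a routing rule. Routine, short tasks go to a small model, and everything else goes to a larger one. The model identifiers, the task list, and the 4,000-token threshold are placeholders, not recommendations.

```python
# Hypothetical model identifiers; substitute whatever models your platform serves.
SMALL_MODEL = "slm-classifier"
LARGE_MODEL = "llm-reasoner"

# Deterministic, well-scoped task types assumed suitable for a small model.
ROUTINE_TASKS = {"classify", "validate", "extract"}

def route(task_type: str, input_tokens: int, max_small_tokens: int = 4000) -> str:
    """Send routine, short tasks to the small model; everything else to the large one."""
    if task_type in ROUTINE_TASKS and input_tokens <= max_small_tokens:
        return SMALL_MODEL
    return LARGE_MODEL
```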

Restricting tool permissions is another key step. By separating "read tools" from "write tools" and assigning different permission levels, organizations can prevent AI agents from escalating privileges. Every agent action should be authenticated and authorized through service accounts rather than shared keys, adding another layer of security [3].

To address semantic conflicts, a business glossary in a centralized data catalog can standardize key definitions. When terms like "customer" or "revenue" are universally understood, AI outputs become more consistent. Additionally, continuous authentication and drift detection frameworks can monitor when agents deviate from their intended goals [5]. For context, organizations report an average ROI of 171% on agentic AI investments [5].

Together, these measures improve data governance and create a solid foundation for deploying reliable, scalable AI systems, complementing the fixes for silos, transformation, security, and real-time integration described earlier.

Integrating AI Fixes for Better Outcomes

Bringing together the fixes mentioned earlier creates a solid base for transforming strategic analysis. By shifting from manual data wrangling to automated integration, teams can redirect their efforts toward generating meaningful insights instead of getting bogged down in tedious processes.

These integrated solutions are the backbone of real-time strategic analysis. For example, 41% of companies struggle with real-time data access, which hinders AI models from delivering timely insights [6]. Centralized data platforms, automated transformation rules, and a universal semantic layer help eliminate these bottlenecks, speeding up frameworks like SWOT analysis and Porter's Five Forces.

Take Fannie Mae's Treasury and Risk teams, for instance. In September 2025, they upgraded their reporting systems for over 1.5 million home loans. By centralizing data with a universal semantic layer and REST APIs, they removed the inefficiencies of manual collation and duplication. Sheel Ratan, Software Engineering Manager at Fannie Mae, highlighted the impact:

"With REST APIs and role-based governance, we can expose data products in real time - without losing control. That means faster access, better decisions, and a single version of the truth across applications" [10].

In financial services, where 51% of leaders cite integration challenges [10], unified data can be a game-changer. Canadian non-prime lender goeasy tackled data inconsistencies in September 2025 by creating a Business Intelligence (BI) Center of Excellence. By standardizing KPIs through unified semantic logic, they achieved a 93% rate of governed reporting. This initiative supported over 2,200 active users and generated more than 150,000 views on a single governed intraday dashboard. Jide Adeoye, Director of Business Intelligence at goeasy, explained:

"We have one central definition for the particular KPI" [10].

Unified data doesn’t just streamline workflows - it enhances the analytical depth of strategic frameworks. Organizations report an average 171% ROI on agentic AI investments, and using Small Language Models for routine tasks while reserving larger models for complex reasoning cuts costs and reduces inference time [5]. Tools like StratEngineAI (https://stratengineai.com) apply these principles, integrating over 20 strategic frameworks with high-quality, unified data feeds.

When data is clean, consistent, and accessible, strategic frameworks deliver reliable results. Consultants can craft detailed briefs with market analysis and competitive intelligence. Venture capitalists can automate pitch deck reviews and produce traceable investment memos. The heavy lifting is done in minutes, not weeks, without sacrificing analytical rigor. This kind of integration removes friction and accelerates both strategic planning and due diligence, making high-quality analysis the new norm.

Conclusion

Data integration plays a key role in determining whether your AI strategy drives meaningful outcomes or stalls in endless pilot phases [1][3]. Without unified and secure data, even advanced AI models can falter, producing unreliable or incomplete insights. As AI Journal aptly puts it:

"True enterprise AI doesn't start with the model - it starts with the data. More specifically, it starts with making that data accessible, clean, secure, and ready for analysis." [1]

When data challenges aren’t addressed, AI agents lack the full business context they need, resulting in fragmented insights and slower decision-making [4]. Solving these issues allows businesses to replace inconsistent reporting and delays with agile, confident choices.

As autonomous agents become more prevalent, data integration becomes even more essential. By 2026, an estimated 40% of enterprise applications will include task-specific agents that rely on real-time data access, strong governance, and audit trails to meet regulatory demands [5]. These capabilities enable quicker, more accurate decisions, ensuring seamless end-to-end integration.

For companies that tackle these integration hurdles, the rewards are clear: organizations report an average ROI of 171% on agentic AI investments [5]. Part of that gain comes from reclaiming engineering time, up to 80% of which typically goes to building custom connectors [4], and redirecting it toward innovation. Unified and well-governed data allows organizations to achieve consistent results, helping consultants draft in-depth briefs more quickly and enabling investors to speed up deal flow without compromising on analysis. Integration is more than just connecting systems; it’s about unlocking the full potential of AI to transform decision-making.

StratEngineAI (https://stratengineai.com) supports strategy consultants and venture capital investors in making fast, well-informed decisions.

FAQs

Where should we start fixing AI data integration?

To tackle fragmented and disconnected enterprise data systems, the first step is creating a unified data integration framework. This framework ensures smooth access to well-organized, high-quality data from various sources, including legacy systems, cloud applications, and third-party databases. Start by pinpointing and linking these scattered data sources. Then, focus on standardizing processes to maintain consistency and address technical hurdles, like custom APIs and security protocols. Building this solid groundwork is key to developing scalable and dependable AI solutions.

How do we prevent schema drift from breaking AI?

To keep schema drift from throwing AI systems off track, it's essential to have tools in place to automatically detect changes. These tools can spot updates like new columns or changes in data types and notify your team right away. Alongside this, adopt solid schema governance practices. This means regularly monitoring your schema and managing changes in a controlled way. Features like auto-evolving schemas for minor, safe updates and continuous validation play a key role in preserving data integrity. These steps help keep AI models stable and reliable, even when schema adjustments occur.

What governance is required for audit-ready AI?

Audit-ready AI governance depends heavily on strong data management, security measures, and compliance practices to uphold transparency and accountability. To achieve this, organizations need to:

Set clear data quality standards to ensure accuracy and reliability.

Implement strict access controls to protect sensitive information.

Maintain audit trails to track data usage and decision-making processes.

It's also essential to comply with privacy laws and data residency regulations to avoid legal pitfalls. Companies should thoroughly document every stage of their AI models' lifecycle, including details about data sources, decision logic, and the steps involved in model development, validation, and deployment. Automated monitoring tools can play a key role in staying compliant and identifying potential risks before they escalate.