AI-Powered Predictive Models: Lessons from Case Studies

Lessons from real-world AI predictive models on reducing fraud, preventing churn, and scaling, with a focus on data quality, testing, and cross-team governance.

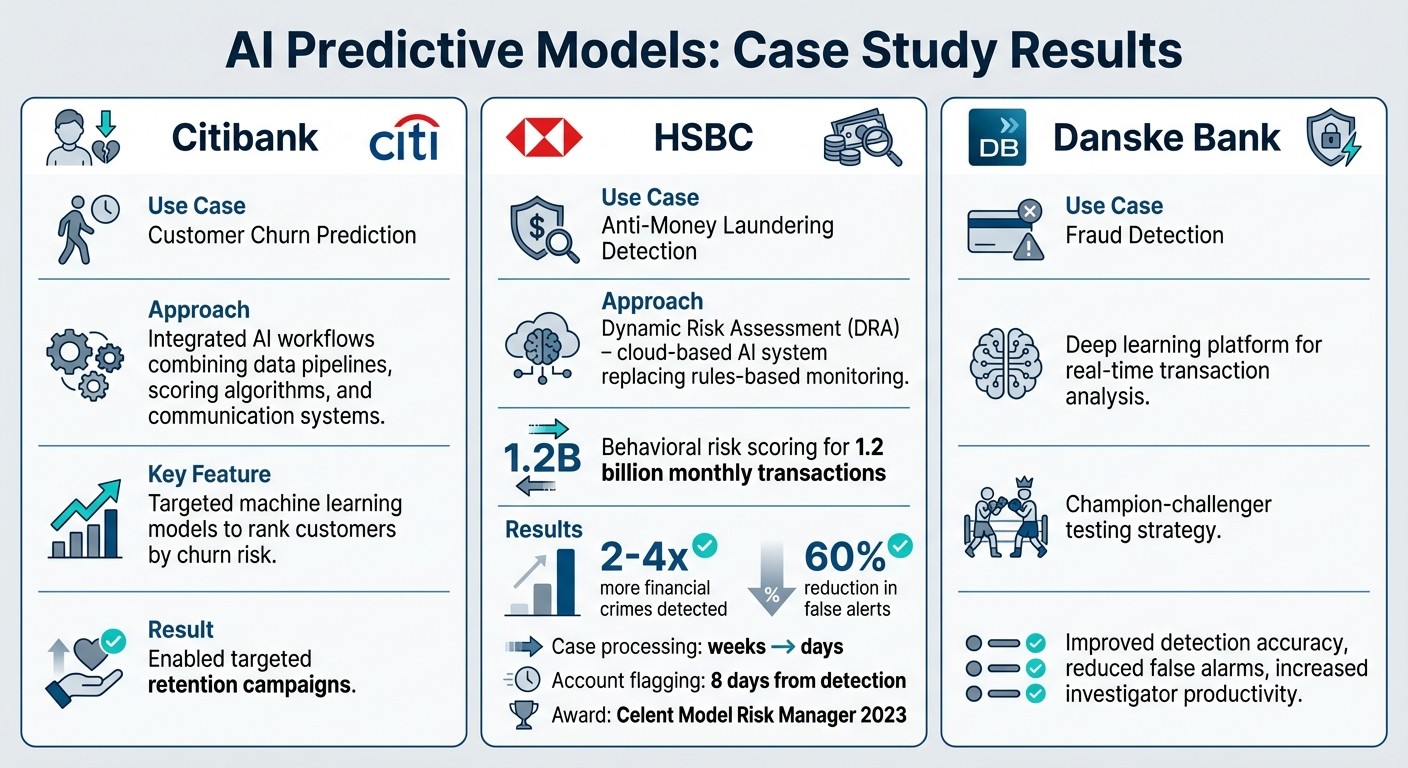

AI predictive models are reshaping industries by turning data into actionable insights. From reducing fraud to improving efficiency, companies like Citibank, HSBC, and Danske Bank demonstrate how these tools solve complex challenges. However, success depends on clean data, clear objectives, and team collaboration - not just technology.

Key takeaways:

Citibank: Used AI to predict customer churn, enabling targeted retention campaigns.

HSBC: Implemented AI for anti-money laundering, detecting two to four times more financial crime while cutting false positives by 60%.

Danske Bank: Adopted deep learning to improve fraud detection and reduce false alarms.

Key principles for success:

Invest in high-quality data and scalable systems.

Refine variables and test models rigorously.

Align IT, business, and compliance teams early.

Challenges like data quality, integration, and ethics require attention. Companies that approach AI strategically see measurable benefits, from cost savings to faster decision-making. Start small, focus on high-impact areas, and ensure governance is part of the process.


Case Studies: AI Predictive Models in Action


Three financial giants - Citibank, HSBC, and Danske Bank - showcase how predictive AI can deliver measurable results. Each example highlights specific operational changes that provide valuable insights for leveraging AI-driven predictive models effectively.

Citibank: Predicting and Preventing Customer Churn

Citibank zeroed in on a critical challenge: identifying customers likely to close their accounts. Instead of a blanket approach, the bank developed targeted machine learning models to rank customers by their churn risk and initiate tailored retention campaigns [2].
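Ranking customers by risk and acting on the top of the list can be sketched in a few lines. This is an illustration only, not Citibank's implementation: the customer IDs, the scores, and the `top_churn_risks` helper are hypothetical, and the scores are assumed to come from an already trained churn model.

```python
from typing import Dict, List, Tuple


def top_churn_risks(scores: Dict[str, float], budget: int) -> List[Tuple[str, float]]:
    """Return the `budget` customers with the highest churn scores, highest first."""
    if budget < 0:
        raise ValueError("budget must be non-negative")
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return ranked[:budget]


# Hypothetical churn probabilities produced by some upstream model.
scores = {"cust_001": 0.12, "cust_002": 0.87, "cust_003": 0.55, "cust_004": 0.91}

# Only the two riskiest customers fit into this campaign's budget.
campaign_list = top_churn_risks(scores, budget=2)
print(campaign_list)
```

Capping the list by budget is one way to keep a retention campaign targeted rather than blanket; a score cutoff would be an equally reasonable alternative.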

This effort relied on integrated AI workflows that combined data pipelines, scoring algorithms, and communication systems to track performance from start to finish [2]. The workflows tied each prediction directly to a retention action and ran on the bank's existing data infrastructure and regulatory risk frameworks [2].

HSBC: Enhancing Anti-Money Laundering Detection

In 2021, HSBC introduced "Dynamic Risk Assessment" (DRA) in the UK and Hong Kong, a cloud-based system that replaced traditional rules-based monitoring with Google Cloud's AI for anti-money laundering (AML). Spearheaded by Jennifer Calvery, Group Head of Financial Crime, this initiative tackled a massive operational challenge: processing up to 1.2 billion transactions monthly [6][7].

The AI system used behavioral risk scoring to identify rapid fund transfers and unusual activity patterns, eliminating the need for manually set rules [7][8]. By late 2023, the results were impressive: the system flagged 2–4 times more financial crimes, reduced false alerts by 60%, and cut case processing times from weeks to days [6][7][8]. Suspicious accounts could now be flagged within just 8 days of detection [7].

"We're finding two to four times more financial crime than we did previously, with much greater accuracy... Now, we have 60% fewer false positive cases."

– Jennifer Calvery, Group Head of Financial Crime, HSBC [6]

The efficiency improvements were echoed by Richard D. May, Group Head of Financial Crime for Global Banking & Markets and Commercial Banking:

"The speed of AML AI and its ability to generate more accurate alerts means we no longer need to spend so much time investigating false positives." [7]

This groundbreaking approach earned HSBC the Celent Model Risk Manager of the Year 2023 award [7][9].

Danske Bank: Fraud Detection with Deep Learning

Danske Bank transitioned from its traditional rules-based fraud detection system to a deep learning platform capable of analyzing massive transaction data in real time [4]. The bank employed a "champion-challenger" testing strategy, running new AI models alongside existing systems to validate their effectiveness before fully rolling them out [4].
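A champion-challenger run can be sketched as a shadow comparison: the existing system (the champion) keeps making the live decision while the new model (the challenger) scores the same transactions, and the disagreements are logged for review. The sketch below is a simplified illustration, not Danske Bank's system. The rule, the toy risk score, and the field names `amount` and `velocity` are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable

Transaction = Dict[str, float]


@dataclass
class ShadowResult:
    agreements: int = 0
    challenger_only: int = 0  # flagged by the challenger but not the champion
    champion_only: int = 0    # flagged by the champion but not the challenger


def champion_rule(tx: Transaction) -> bool:
    """Hypothetical legacy rule: flag any transfer above a fixed amount."""
    return tx["amount"] > 10_000


def challenger_model(tx: Transaction) -> bool:
    """Stand-in for a trained model: flag when a toy risk score exceeds 0.5."""
    score = 0.4 * min(tx["amount"] / 10_000, 1.0) + 0.6 * tx["velocity"]
    return score > 0.5


def run_shadow(
    transactions: Iterable[Transaction],
    champion: Callable[[Transaction], bool],
    challenger: Callable[[Transaction], bool],
) -> ShadowResult:
    """Score every transaction with both systems and count where they differ."""
    result = ShadowResult()
    for tx in transactions:
        live, shadow = champion(tx), challenger(tx)
        if live == shadow:
            result.agreements += 1
        elif shadow:
            result.challenger_only += 1
        else:
            result.champion_only += 1
    return result


sample = [
    {"amount": 12_000, "velocity": 0.1},  # large but slow: only the rule flags it
    {"amount": 3_000, "velocity": 0.9},   # small but rapid: only the model flags it
    {"amount": 500, "velocity": 0.1},     # neither flags it
    {"amount": 15_000, "velocity": 0.8},  # both flag it
]
shadow_result = run_shadow(sample, champion_rule, challenger_model)
print(shadow_result)
```

The disagreement counts matter more than the agreements: cases flagged only by the challenger are candidate missed fraud, and cases flagged only by the champion are candidate false alarms. Both need investigator labels before the challenger can be promoted.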

The deep learning approach uncovered subtle fraud patterns that older systems often missed, significantly improving detection accuracy. By reducing false alarms, investigators could focus on genuine threats, boosting productivity and effectiveness [4].

These examples highlight how predictive AI can transform operations, offering actionable takeaways for others looking to implement similar systems.

Lessons from the Case Studies

Looking at examples from Citibank, HSBC, and Danske Bank, three key principles stand out. Companies that embrace these principles see measurable benefits, while those that ignore them often miss out on significant cost savings [4].

Building on Clean Data and Scalable Systems

Bad data leads to bad results. Walmart's logistics AI shows how clean data pays off: it saved the company $75 million in a single fiscal year [4]. Shell's predictive maintenance system processes 20 billion sensor readings every week and generates 15 million predictions a day. That scale was only possible because Shell treated data as a strategic asset and built systems that could grow with it. Such systems support early-stage projects and long-term growth alike, and they give teams a shared, reliable basis for measuring outcomes.

Refining Variables and Model Parameters

Getting clear on what you're measuring makes all the difference. For instance, RNDC, a wine distributor, cut forecasting errors by 20% by developing semantic models that clarified key business terms [10]. Without this clarity, AI systems can produce results that seem plausible but are ultimately inaccurate, undermining trust. Systematic testing is another critical step. Consumer Reports used rigorous red-teaming to improve safety guardrails, while Amerit Fleet slashed error detection time by 90% and automated 30% of repair orders [4]. These examples highlight the importance of refining both variables and model parameters to achieve reliable outcomes.

Coordinating Across Teams for Better Results

Even the best technical solutions won't succeed if users don't adopt them. Multidisciplinary collaboration is essential, as Stefan Spiegel, CFO of SBB Cargo AG, points out:

"All these models can only be developed and put into operation if the specialists in the triangle of IT, mathematical modelling, and business work closely together." [11]

At SBB Cargo, involving train drivers in the design process transformed an 80–90% accurate forecast into a highly effective operational tool by incorporating their route-specific expertise. Similarly, Leden Group doubled its viable AI use cases - from 25 to 50 - by engaging subject matter experts early on. This collaboration helped identify bottlenecks and prioritize initiatives with the most impact, something data scientists alone might have missed. Most AI failures aren't due to poor models but to shortcomings in processes, team coordination, and governance [2]. Involving compliance, legal, and security teams from the start ensures AI solutions address real-world business needs rather than remaining theoretical concepts.

Common Problems and Solutions

Even with strong foundations and collaborative teams, deploying predictive models can still hit snags. These technical challenges often create a gap between early pilot success and consistent production reliability. The most common obstacles include data quality issues, integration difficulties, and ethical concerns. Tackling these head-on can determine whether a model delivers measurable results or falls short. Let’s explore practical solutions for these challenges.

Addressing Data Quality and Bias

Poor data quality compounds as a system scales. Consumer Reports, for example, launched "AskCR" in February 2026. The goal was to make 90 years of product reviews easily accessible while avoiding AI-generated inaccuracies. Working with NineTwoThree, the team used a retrieval-augmented generation (RAG) architecture to structure decades of ratings and articles into a vector database. Rigorous edge-case testing produced a 10x improvement in safety scores and ensured the system referenced only verified products from the trusted database [4].

The key takeaway? Treat data as a strategic priority from the start. Amerit Fleet applied this principle by deploying a custom AI model in February 2026 to analyze repair orders for billing errors. They set clear confidence thresholds to determine when the system could act independently and when human oversight was needed. This approach sped up error detection by 90% and auto-resolved 30% of repair orders, all while providing transparent reasons for any flagged issues. This transparency built trust among users [4].
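Confidence thresholds of this kind can be sketched as a small routing function. The threshold values, action names, and the `route` helper below are assumptions for illustration; the source does not state which cutoffs Amerit Fleet used.

```python
from typing import NamedTuple


class Decision(NamedTuple):
    action: str
    reason: str


# Assumed cutoffs; in practice these would be tuned against reviewed cases.
AUTO_RESOLVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.60


def route(confidence: float, finding: str) -> Decision:
    """Decide whether a flagged item is resolved automatically or sent to a person."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    detail = f"{finding} (confidence {confidence:.2f})"
    if confidence >= AUTO_RESOLVE_THRESHOLD:
        return Decision("auto_resolve", detail)
    if confidence >= REVIEW_THRESHOLD:
        return Decision("human_review", detail)
    return Decision("no_action", detail)


print(route(0.97, "duplicate labor line"))
print(route(0.72, "part price above contract rate"))
print(route(0.30, "possible mileage mismatch"))
```

Returning the reason alongside the action keeps each decision explainable, which is the transparency the paragraph above credits with building user trust.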

Scaling Models and System Integration

Scaling a model from a pilot phase to full deployment often reveals hidden technical challenges. Shell's predictive maintenance platform illustrates this: by 2022, it monitored over 10,000 assets, processed 20 billion sensor readings weekly, and ran 11,000 models to generate 15 million daily predictions [4]. Achieving this scale required a modular design and a robust cloud infrastructure to handle massive data growth.

Integration goes more smoothly when AI is layered over existing systems rather than replacing them outright. This approach connects AI to platforms such as CRM, ERP, and HRIS, which reduces friction and lets teams deliver results quickly. It matters because, although 72% of companies have adopted at least one AI capability, only 23% report meaningful cost savings [4]. Streamlined integration helps organizations move faster from insight to action.

Maintaining Ethical AI Practices

Bias in data can lead to failures, especially in sensitive areas like hiring, lending, and healthcare [2]. The solution starts with involving legal, compliance, and security teams from the beginning, rather than treating governance as an afterthought. As Sajli from Worqlo aptly states:

"Governance enables scale. It does not prevent it." [5]

Embedding ethical safeguards helps ensure AI systems remain trustworthy and effective. Best practices include incorporating human oversight for critical decisions, regularly testing for demographic biases, and maintaining detailed audit trails for explainability. For example, BMW implemented AI-powered computer vision in its assembly lines by 2025. This shift moved quality control from reactive fixes to predictive measures, cutting vehicle defects by 60%. Importantly, the AI supported human inspectors instead of replacing them [4]. These ethical frameworks not only enhance reliability but also turn predictive models into long-term strategic tools.

Conclusion: Applying Predictive Models to Strategy

Case studies clearly show that when AI predictive models are treated as strategic tools, they deliver measurable results. These examples highlight a shift from relying on outdated, periodic strategic reviews to embracing real-time, continuous intelligence [3].

However, despite the growing use of AI, many companies struggle to see major cost savings. Although 72% of enterprises were expected to have adopted at least one AI capability by 2025, only 23% report achieving substantial savings [4]. This gap often comes down to common missteps: treating AI as just another software tool rather than a shift in operating models, measuring how often AI is used rather than its actual business impact, and involving governance so late that deployments stall [5]. As W. Chan Kim from INSEAD puts it:

"Technologies, whether existing or new, are tools that enable and advance strategic objectives, but they are not a substitute for strategy itself" [1].

To bridge this gap, a focused and step-by-step approach to AI implementation is critical. The first step? Tackle high-pain areas - problems like strategic misalignment, delays in execution, or recurring errors [3]. Research shows that companies that use AI to dynamically manage resources are 2.4 times more likely to achieve long-term success [3]. Start small with narrow, well-defined use cases tied to clear goals like cutting costs, saving time, or reducing errors. Run these models alongside current processes to validate their effectiveness before making a full transition [2].

Predictive planning can increase efficiency in scenario modeling and resource optimization by 40–60%, but only when organizations allocate resources wisely. One recommended split is 70% for people, 20% for technology, and 10% for algorithms [12]. The people share should include building AI literacy among employees so they can question AI recommendations and understand how the models work. This keeps institutional knowledge strong and decisions well-informed [12].

The organizations that succeed with AI prioritize strategic data use and amplify human judgment. By applying these lessons, predictive models can evolve from being just another tech initiative to becoming powerful tools for faster, smarter decision-making. With the right approach, these models can drive agility and informed strategies that truly deliver results.

FAQs

What data is needed before building a predictive model?

To create a predictive model, the first step is collecting reliable, relevant historical data that aligns with the problem you're tackling. This data needs to be well-rounded, cleaned up, and put into context to help uncover meaningful patterns and trends. Key tasks include preprocessing steps like dealing with missing data, normalizing values, and applying feature engineering. If necessary, pull in data from multiple sources to enrich your dataset. Remember, the success of your model hinges on how well this initial data is prepared.
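Two of the preprocessing steps mentioned above, handling missing data and normalizing values, can be sketched with the standard library alone. Median imputation and min-max scaling are just one reasonable choice among several, and the sample column is made up.

```python
from statistics import median
from typing import List, Optional


def impute_median(values: List[Optional[float]]) -> List[float]:
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = median(observed)
    return [fill if v is None else v for v in values]


def min_max_scale(values: List[float]) -> List[float]:
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no signal; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical monthly spend column with one missing value.
raw_spend = [10.0, None, 30.0, 20.0]
filled = impute_median(raw_spend)
scaled = min_max_scale(filled)
print(filled)
print(scaled)
```

In a real project the median, minimum, and maximum would be computed on the training split only and then reused on validation and production data, so that information from unseen data does not leak into the model.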

How can I prove an AI model outperforms my current rules-based process?

To highlight how an AI model outperforms traditional methods, it's crucial to focus on measurable factors like accuracy, speed, or cost efficiency. Start by running the AI model side-by-side with your existing rules-based process, using the exact same data set. This ensures a fair comparison.

Track the results over time to pinpoint specific improvements. For example, does the AI offer higher accuracy in predictions? Does it make faster decisions, saving valuable time?

To make your findings clear and convincing, use visualizations like graphs or charts alongside a detailed analysis. This approach not only makes the data easier to understand but also builds trust in the AI model’s ability to deliver better results.
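A side-by-side comparison on the same labeled data can be sketched as follows. The labels and predictions are invented for illustration, and precision, recall, and false-positive count are assumed to be the metrics that matter; a real evaluation would pick metrics to match the business goal.

```python
from typing import Dict, Sequence


def summarize(predictions: Sequence[bool], labels: Sequence[bool]) -> Dict[str, float]:
    """Compute precision, recall, and the false-positive count for one system."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    tp = sum(1 for p, y in zip(predictions, labels) if p and y)
    fp = sum(1 for p, y in zip(predictions, labels) if p and not y)
    fn = sum(1 for p, y in zip(predictions, labels) if not p and y)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall, "false_positives": fp}


# Ground truth from investigated cases (True = confirmed issue). Hypothetical data.
labels = [True, False, False, True, False, True, False, False]
# Decisions from each system on exactly the same eight cases.
rules_flags = [True, True, True, False, False, True, True, False]
model_flags = [True, False, False, True, False, True, True, False]

rules_report = summarize(rules_flags, labels)
model_report = summarize(model_flags, labels)
print("rules:", rules_report)
print("model:", model_report)
```

Because both systems see identical cases, any difference in the reports reflects the systems themselves rather than a change in the data.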

How can I prevent bias and ensure compliance with predictive AI?

To ensure fairness and compliance, it's crucial to regularly audit your training data. This helps confirm that the data is both representative and free from bias. Additionally, updating datasets on a consistent basis can minimize model drift, keeping your AI systems accurate and relevant.
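One common way to decide when a dataset needs refreshing is to measure how far recent inputs have shifted from the training data. The sketch below uses the population stability index (PSI), which is not mentioned in the source and is offered only as one possible drift check. The bin shares are hypothetical, and the alert level of 0.25 is a rule of thumb rather than a standard.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp to eps so an empty bin does not cause log(0) or division by zero.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Share of customers per income band at training time versus last month.
training_share = [0.25, 0.50, 0.25]
recent_share = [0.10, 0.40, 0.50]

drift = population_stability_index(training_share, recent_share)
ALERT_LEVEL = 0.25  # assumed threshold for "investigate and consider retraining"
print(f"PSI = {drift:.3f}, alert = {drift > ALERT_LEVEL}")
```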

Bias audits are another essential step. By performing these reviews and examining vendor-provided bias documentation, you can better identify and manage potential risks. These practices not only support ethical AI use but also align with governance standards, ensuring your AI-driven decisions are both responsible and balanced.
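A basic bias audit can start by comparing outcome rates across groups. The sketch below computes per-group selection rates and their ratio, which is one simple fairness measure among many. The group labels and decisions are invented, and the 0.8 cutoff follows the "four-fifths" rule of thumb from US employment guidance, used here only as an assumed flag for further review rather than a legal test.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of positive decisions per group, from (group, approved) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision, so the rates are equal
    return min(rates.values()) / highest


# Hypothetical model decisions: group A approved 4 of 5, group B approved 2 of 5.
decisions = (
    [("A", True)] * 4 + [("A", False)]
    + [("B", True)] * 2 + [("B", False)] * 3
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed review threshold
print(rates, ratio, needs_review)
```

A low ratio does not prove the model is unfair, and a high one does not prove it is fair. It is a signal for the compliance and data teams to look at the underlying data and features together.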